Author: Alexander Ivanyuk, Senior Director, Technology

AI is moving fast, and with that speed comes a new set of terms that many business readers are now hearing for the first time: RAG and MCP. They may sound technical, but the ideas behind them are actually practical. They describe how modern AI systems get better information, connect to business tools, and, in some cases, go beyond answering questions to carrying out work. That shift matters for managed service providers (MSPs) and small and medium IT deployments (SMDs) because customers are no longer asking only for a chatbot. They increasingly want AI that can answer using company documents, connect to systems like CRM or ticketing and help complete real tasks.

The industry is clearly moving in that direction. Google Cloud said in early 2025 that, for enterprise deployments, retrieval-augmented generation was becoming “nonnegotiable” because it helps close the gap between impressive AI demos and trustworthy real-world performance. Anthropic later said that MCP had grown to more than 10,000 active public servers and had been adopted by products including ChatGPT, Gemini, Microsoft Copilot and Visual Studio Code. Put together, those signals show where the market is heading: from simple answers to grounded answers, then to connected systems, and finally, to AI that can help do the job.

What is RAG?

RAG stands for retrieval-augmented generation. In plain language, it means the AI does not rely only on what it learned during training. Before answering, it first looks up relevant information from approved sources such as documents, knowledge bases, product manuals, policies or support records. Microsoft Azure’s overview of RAG defines it as a framework that retrieves relevant information from external sources to improve the quality, relevance, and reliability of generated answers.

A simple example helps. Imagine a support engineer asks, “Does this customer’s license include feature X?” A normal model might try to answer from general training and get it wrong. A RAG-enabled system can first check approved sources, such as licensing rules or current product documents, and then answer using that material. That is why RAG is becoming such a common pattern for business AI: it helps the model answer with the company’s actual information, not just its general memory.

For MSPs and SMDs, RAG matters because many customer use cases are really knowledge problems. Customers want faster answers from internal documentation, better support assistance, easier access to product information and more useful internal search. But RAG also raises an important question: Which information is the AI allowed to retrieve, and for whom? Once AI is connected to business knowledge, access control and data exposure start to matter much more.

What is MCP?

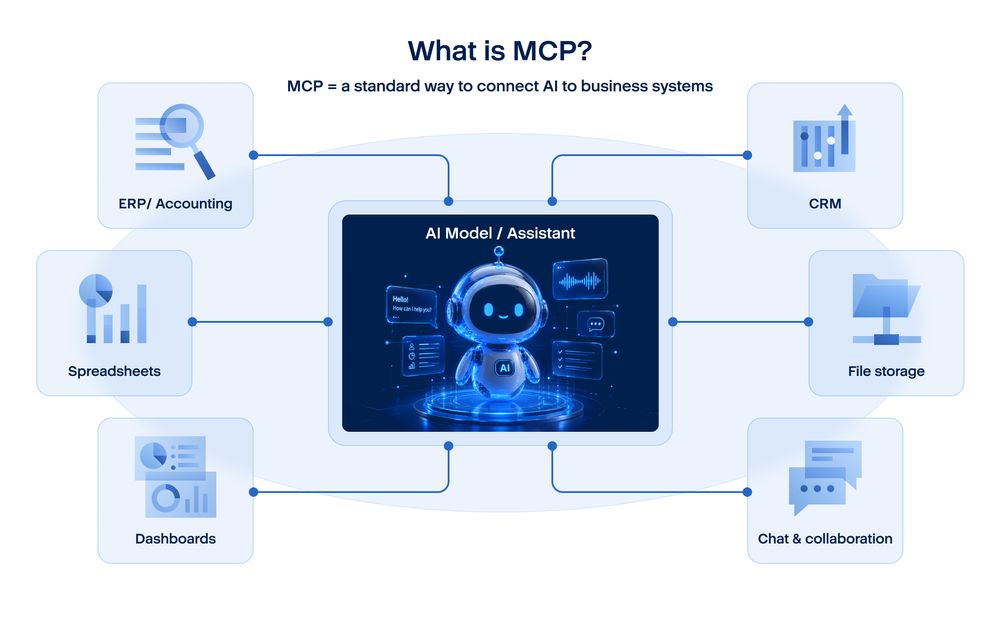

MCP stands for model context protocol. Anthropic’s MCP documentation describes it as an open protocol that standardizes how applications provide context to large language models. Anthropic also uses a helpful comparison: MCP is like a USB-C port for AI applications. That is a useful way to explain it, because the point of MCP is consistency. Instead of every AI connection being custom and different, MCP gives models a more standard way to connect to tools and data sources.

That matters because businesses do not want AI systems that live in isolation. They want AI that can work with the systems they already use: CRM, ticketing, file storage, chat, dashboards and other business software. Without a common connection model, every integration becomes a one-off project that is harder to maintain and harder to secure. OpenAI added support for remote MCP servers in its Responses API in May 2025, which shows that MCP is not just a niche experiment. It is becoming part of the wider AI tooling ecosystem.

There is also strong evidence that MCP adoption has accelerated quickly. In December 2025, Anthropic said there were more than 10,000 active public MCP servers, and that MCP had been adopted by ChatGPT, Gemini, Microsoft Copilot, and Visual Studio Code. That does not mean every MSP customer is already using MCP directly. But it does mean the protocol is becoming an important part of how connected AI is being built across the industry.

For MSPs, the relevance is straightforward. The more AI connects to real systems, the more customers need help answering practical questions: Which systems can the AI reach? What permissions should it have? Which connectors are approved? How do you separate tenants correctly? How do you stop a useful connection from turning into a risky one? MCP is not the whole answer, but it is part of the new architecture that makes those questions unavoidable.

Why MSPs and SMDs should care

For MSPs, these are not abstract architecture terms. They describe the real environments customers are starting to build. A customer may begin with a simple request such as, “We want an AI assistant for support,” or “We want AI to help our team answer questions faster.” But once that request becomes serious, the next questions appear quickly. Where will the assistant get its answers? Which documents can it use? Can it connect to the CRM? Can it read ticket data? Can it update a case? Can it perform the next step automatically? That is already the world of RAG, MCP and agentic AI.

A lot of these questions come from one risk that deserves special attention: the rogue or untrusted MCP server. MCP is useful because it gives AI a standard way to connect to tools and data, but that also means the MCP server becomes part of the trust boundary. If a server is malicious, poorly designed, compromised or simply connected without proper review, it can expose much more than expected. It may present unsafe tools to the model, return manipulated content, request broader permissions than it really needs or create a path to sensitive systems and data. In practice, the danger is not only “bad answers.” It is unauthorized access, harmful actions, privilege escalation, data leakage and the kind of hidden tool misuse that is difficult to spot once AI is allowed to call external functions.

More broadly, MCP-related threats are really tool-governance threats. The business has to think about which MCP servers are trusted, which tools they expose, whether they are read-only or write-capable, what identities are allowed to use them and what happens if the model is manipulated into using them in the wrong way. This is one reason MCP changes the security conversation: once AI can connect to outside tools, the company is no longer only managing prompts and outputs. It is managing access and actions.

This is why MSPs should care early. If they wait until customers are deeply integrating AI into business systems, the conversation becomes harder. It is much better to explain it in simple terms from the beginning: what the AI can read, what it can connect to, and what it can do. Those are the questions that shape real-world security, governance, supportability and customer trust.

The same logic applies to small and medium deployments. Smaller organizations may think these topics only matter to large enterprises, but they often reach these questions faster than they expect. A small business may start with an assistant for internal use, then want it to read shared documents, then connect it to service records or customer data, and then ask whether it can help complete a task instead of just suggesting one. That is exactly how AI moves from a productivity experiment into an operational system.

The good news is that Acronis GenAI Protection helps with most of these concerns. Our approach is built around centralized GenAI management for applications, agents, and MCP servers, together with usage monitoring, DLP, prompt-injection protection, and a layered AI security engine. The current roadmap also explicitly includes Acronis DLP + MCP server protection, which fits this need directly. In practical terms, that means MSPs and customers get a way to govern which AI-connected tools are allowed, reduce the chance of sensitive data being sent where it should not go, detect harmful prompts that may try to manipulate model behavior, and keep visibility through centralized monitoring and reporting.

Conclusion

RAG, MCP, along with agentic AI are not just new labels. They describe the practical building blocks of modern AI systems. RAG helps AI answer with better and more current information. MCP helps AI connect to business systems in a more standard way. Agentic AI allows AI to move from answering to taking part in real work.

For MSPs and SMDs, Acronis GenAI Protection is the practical answer to these growing AI challenges. It is designed to help businesses make AI use more visible, more controlled and safer in everyday work by addressing the issues that matter most: shadow AI discovery, data-loss prevention, prompt-injection protection, denial-of-wallet monitoring, policy management for GenAI applications, agents and MCP servers, and a layered AI security approach. It also supports the broader controls businesses now need as AI becomes more connected to knowledge sources, business tools, and real workflows, including guardrails, content policy, tenant-aware access, RAG access control and tool-execution boundaries. For MSPs, that means a clearer way to help customers adopt AI without losing governance. For SMDs, it means being able to use AI productively without losing control over data, access or business processes.

About Acronis

A Swiss company founded in Singapore in 2003, Acronis has 15 offices worldwide and employees in 60+ countries. Acronis Cyber Platform is available in 26 languages in 150 countries and is used by over 21,000 service providers to protect over 750,000 businesses.