Generative AI is no longer a future topic that only big enterprises need to worry about. It is already part of daily work. People use it to write emails, summarize documents, search for answers, draft proposals, prepare reports and respond to customers faster.

That is one reason the conversation has changed so quickly. In the Stanford HAI 2025 AI Index Report, 78% of organizations said they were using AI in 2024, up from 55% the year before. The same report says 71% were already using generative AI in at least one business function, up from 33% in 2023. That tells us something important: GenAI is not a side experiment anymore. It is moving into normal business operations.

For managed service providers (MSPs) and small and medium IT deployments (SMDs), that matters because customers do not need a technical lecture on how models are built. They need a plain explanation of what GenAI really is, why it is spreading so fast and why it creates a new security question.

What is GenAI?

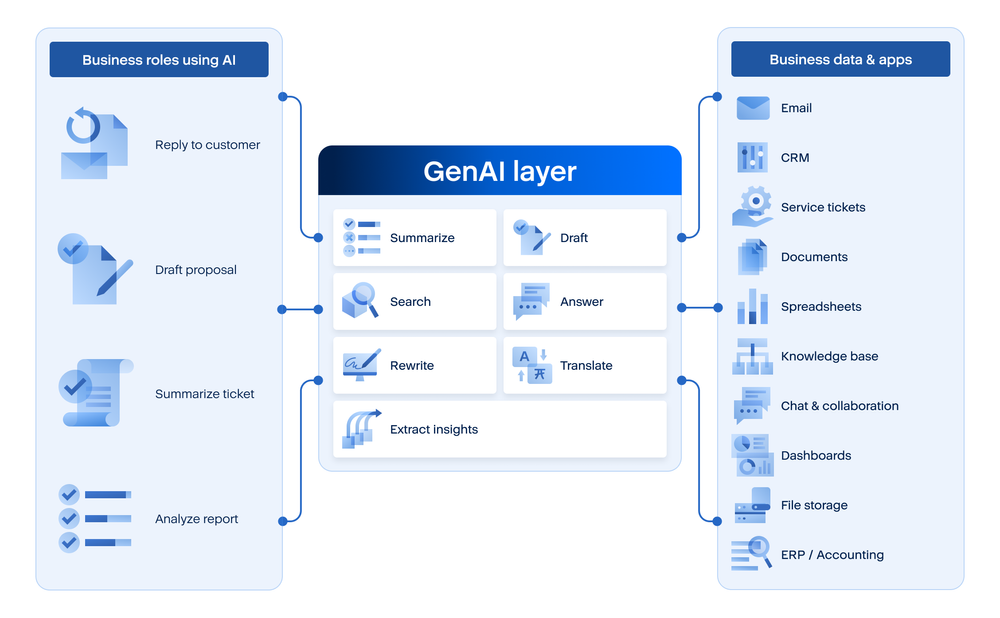

In simple language, GenAI is a type of AI that can create new content. It can write text, summarize information, suggest answers, rewrite a document, generate code, translate content, or help a user search through large amounts of material in a much more natural way.

That is what makes it different from traditional software. Normally, software asks people to work through menus, fields, and fixed steps. GenAI lets people ask for what they want in everyday language. A user can type, “summarize this meeting,” “draft a customer reply,” or “explain this report in simpler terms,” and the system produces a usable result.

A useful way to explain it to customers is to say that GenAI adds a new layer between people, business data and business applications. It helps users work with information faster, but it also changes how information flows through the company.

Many GenAI applications are powered by LLMs, or large language models. An LLM is the engine underneath the application that understands prompts and generates language-based output such as answers, summaries, drafts, code or recommendations. So when we talk about GenAI, it is often more precise to say “GenAI applications powered by LLMs.” That same LLM layer is also what makes agentic AI possible, because it helps the system interpret goals, reason through steps, and work with tools or connected data sources.

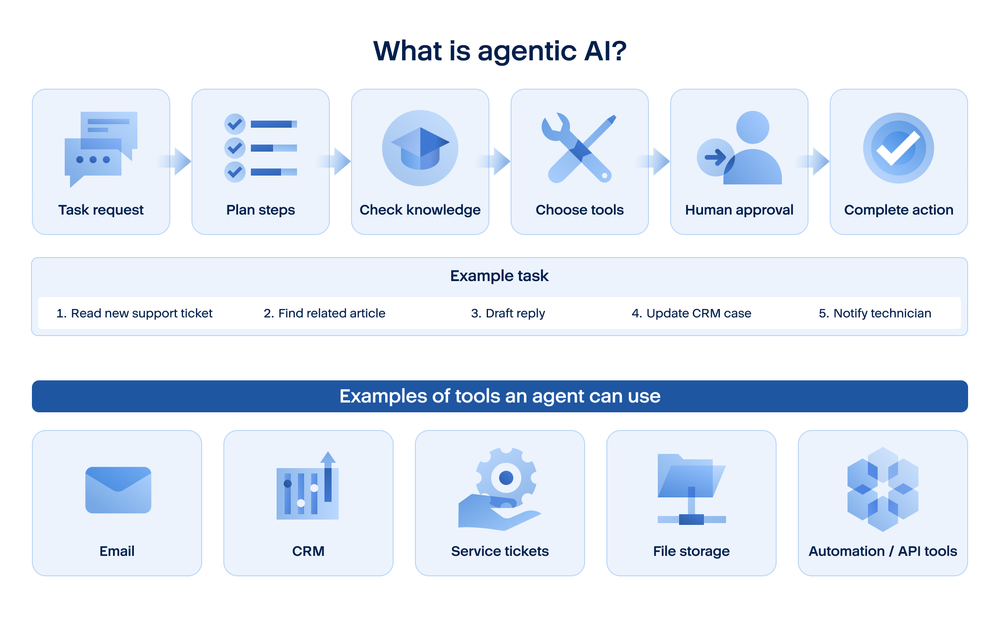

What is agentic AI?

Agentic AI is the next step. Instead of just answering one prompt at a time, an agentic system can work through a multistep task. OpenAI’s guide to building agents says agents are systems that independently accomplish tasks on behalf of users. In other words, an agent does not just reply. It can plan, use tools, bring in more context, and keep moving toward a goal.

A practical example makes this easier to picture. A normal AI assistant might summarize a support ticket. A RAG-enabled assistant, meaning one that uses retrieval-augmented generation to look up relevant documents before answering, might summarize the ticket and pull the right knowledge article. An agentic system might read the ticket, check the customer’s history in the CRM, look in the knowledge base for the likely fix, draft the reply, update the case and notify the technician, with human approval where needed. OpenAI’s ChatGPT agent materials describe this kind of agentic workflow as reasoning, researching and taking actions on a user’s behalf while pausing for clarification or confirmation when needed.

This is also where the security and governance discussion becomes much more serious. The more the AI can do, the more businesses need to think about boundaries. What tools can it use? Which actions are allowed automatically? When must a human stay in control? OpenAI’s ChatGPT agent safety guidance warns that agents can reach sensitive data and take actions on a user’s behalf, which is exactly why approvals, app controls and careful oversight matter.
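For readers who want to see what such boundaries can look like in practice, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular agent product works: the tool names, the `AGENT_POLICY` table and the `check_tool_call` function are all hypothetical. The one design choice worth noting is that any tool not listed is denied by default.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"                    # the agent may run this on its own
    NEEDS_APPROVAL = "needs_approval"  # a human must confirm first
    DENY = "deny"                      # the agent may never run this


# Hypothetical policy table. The tool names are illustrative only and
# loosely follow the support-ticket example in the text.
AGENT_POLICY = {
    "search_knowledge_base": Decision.ALLOW,
    "read_crm_history": Decision.ALLOW,
    "draft_reply": Decision.ALLOW,
    "update_case": Decision.NEEDS_APPROVAL,
    "send_customer_email": Decision.NEEDS_APPROVAL,
}


def check_tool_call(tool_name: str) -> Decision:
    """Return the policy decision for a tool; unlisted tools are denied."""
    return AGENT_POLICY.get(tool_name, Decision.DENY)
```

In this sketch, reading and drafting happen automatically, anything that changes a record or reaches a customer waits for a person, and a tool nobody has reviewed (say, a hypothetical `delete_account`) is refused outright.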

Why GenAI is suddenly everywhere

The reason is not mysterious. GenAI saves time. It removes friction from common tasks. People do not have to learn a complicated tool before they see value, so adoption happens fast.

That speed is also being pushed by business pressure. The World Economic Forum’s Future of Jobs Report 2025 says technological change, including AI, is one of the biggest forces reshaping business and work. In parallel, Microsoft’s 2025 Work Trend Index reports that 82% of leaders expect to use digital labor to expand workforce capacity within the next 12 to 18 months. Businesses are not only interested in AI because it is new. They are interested because teams are under pressure to do more, faster.

This is why AI is not only a big-company story. Large enterprises may talk more publicly about AI programs, but smaller organizations feel the same pressure to save time, answer customers faster and get more value from limited staff. In some ways, smaller companies can be affected even sooner, because people often adopt useful tools before formal policy catches up.

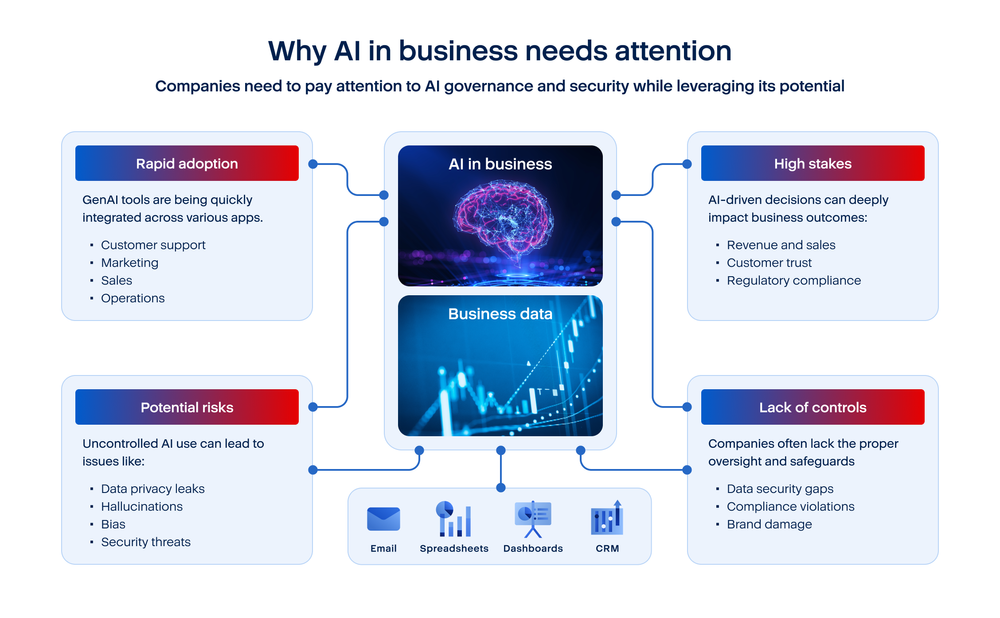

Why companies need to think about it more carefully

The value of GenAI is real, but that is only half of the story. The other half is that GenAI creates a new path for data, instructions and decisions. People may paste company information into prompts, upload internal files for summarization or rely on AI-generated answers that sound confident even when they are wrong.

That is why this should not be treated only as a productivity discussion. It is also a governance and security discussion. When employees use AI, companies need to think about what data is being shared, which tools are approved, who can use them and how outputs are checked before they affect customers, operations or internal decisions.

This is also how NIST’s Generative AI Profile frames the issue. NIST treats generative AI as a distinct risk-management challenge and notes that some risks are new while others are made worse by the way GenAI works. That is a useful business message: GenAI does not replace traditional security concerns, but it adds a new layer that companies have to understand and manage.

Why this is especially relevant for MSPs and SMDs

For MSPs, GenAI should be seen as a managed environment issue, not just a product feature. A customer may ask about a chatbot or an AI assistant, but the bigger question is what sits behind it. Which tools are already in use? Are staff using approved business accounts or personal accounts? What kinds of data are being entered? Is anyone checking what is safe and what is not?

Those are familiar service-provider questions. MSPs already help customers manage endpoints, identities, cloud apps, collaboration platforms, backup and security controls. AI now needs to be part of the same conversation, because it touches many of those areas at once.

For small and medium deployments, the challenge is usually not building advanced AI systems from scratch. The challenge is much more basic and much more immediate: informal adoption, limited visibility, unclear ownership, and weak policy. A smaller business may not have an AI team, but it can still have employees using AI every day in ways that affect data handling, quality, and security.

Why unmanaged use is the real problem

One of the clearest warning signs comes from a TELUS Digital survey published in February 2025. It found that 68% of enterprise employees who use public GenAI at work do so through personal accounts, and 57% said they had entered sensitive or high-risk information into those tools. Those numbers matter because they show how AI use can grow outside normal company controls.

That is the real problem for many MSP customers and SMD environments. The issue is often not a carefully planned AI deployment. It is shadow use that happens quietly across browsers, productivity apps, support workflows, and personal accounts. By the time leadership starts asking about AI policy, staff may already be using several tools every week.

This is why the right first step is usually not to ban everything or to promise an AI transformation overnight. The right first step is to make AI use visible. Then companies can decide what is approved, what data should never be shared, where more training is needed and what controls should be added.
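To make "visible" concrete, the sketch below shows one simple way an inventory of AI use could be summarized. It is only an illustration: the event format (a `tool` and an `account` field), the `APPROVED_TOOLS` set, the `COMPANY_DOMAIN` value and both function names are assumptions made for this example, and real usage data would come from whatever discovery or logging tools the company already has.

```python
from collections import Counter

# Hypothetical values for illustration only.
APPROVED_TOOLS = {"approved-assistant.example"}
COMPANY_DOMAIN = "example.com"


def classify_event(event: dict) -> str:
    """Label one observed use of an AI tool."""
    if event["tool"] not in APPROVED_TOOLS:
        return "unapproved_tool"
    # An approved tool used with a non-company login is still a gap.
    if not event["account"].endswith("@" + COMPANY_DOMAIN):
        return "personal_account"
    return "approved"


def summarize_usage(events: list) -> Counter:
    """Count how many observed uses fall into each label."""
    return Counter(classify_event(event) for event in events)
```

Even a rough count like this gives leadership something to decide on: which tools to approve, where personal accounts are in use and where training should start.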

What customers need to hear in plain language

Most customers do not need abstract AI language. They need a simple explanation. GenAI is a new way to work with information through natural language. It can help people move faster, but it can also create new risks if it is used without visibility, policy and review.

That message works well for MSPs because it is balanced. It avoids hype, but it also avoids denial. AI can bring real value. At the same time, companies need to think about where it is being used, what data is flowing into it, how outputs are checked, and which controls should be in place.

For SMD customers, this is not about copying a large-enterprise AI program. It is about asking a few practical questions: Where are people already using AI? What is allowed? What should never be shared? Who is responsible for oversight? And how do we make sure speed does not come at the cost of security or trust?

Conclusion

GenAI matters because it changes how people create, search, summarize, and respond. That is why it is spreading so quickly across businesses of every size. But that same ease of use is also what makes it a new security question.

For MSPs and SMDs, the takeaway is straightforward. This is not only a story about the largest enterprises. It is a story about ordinary business work, smaller teams, faster adoption and the need for better visibility and control. The companies that do well with AI will not be the ones that talk about it the most. They will be the ones that understand it clearly and manage it sensibly.

Acronis addresses these concerns with Acronis GenAI Protection, a solution designed to help MSPs and their customers make AI use more visible, more governable and safer in day-to-day work. Acronis positions it around the main problem areas businesses now face: shadow AI discovery, data-loss prevention, prompt-injection protection, denial-of-wallet monitoring, policy management for GenAI applications, agents and MCP servers, and a layered AI security engine. The idea is simple: if AI is becoming part of normal business workflows, companies need controls that match that reality rather than relying only on older security methods.

About Acronis

A Swiss company founded in Singapore in 2003, Acronis has 15 offices worldwide and employees in 60+ countries. Acronis Cyber Platform is available in 26 languages in 150 countries and is used by over 21,000 service providers to protect over 750,000 businesses.